HarvardSites UAT Program

Building a user acceptance testing practice from the ground up

Role: Lead UX Researcher & UAT Lead

Scope: Research operations, process design, quality assurance, cross-functional collaboration

THE PROBLEM

When I returned from my holiday break in January 2025, I had a message waiting from the lead engineer. While I was away, ownership of user acceptance testing for the HarvardSites Drupal platform had been assigned to me. There was no formal briefing or handoff. Just a new responsibility and a sprint already in progress. I had to get up to speed fast.

UAT had previously been handled by the engineering team, the same team responsible for building and technically validating the work. That's a meaningful conflict of interest. The people closest to the code are rarely the right people to assess whether something works for the humans who will actually use it. But without a dedicated owner, there had been no one else to do it.

UAT within the HarvardSites product team had existed in some form before I stepped in, but it had never been formally defined. There was no written process, no clear criteria for what qualified for testing, no documented ownership, and no consistent approach to reporting findings back to the team. Stories moved through sprints and got released without a shared understanding of who was responsible for validating them, what "passing UAT" actually meant, or what happened when something didn't pass. The work was getting done, but it was held together by informal communication and individual judgment rather than any repeatable system.

That's the environment I inherited. And the question I had to answer quickly was whether to simply start testing things and figure it out as I went, or to stop first and build something that was repeatable and scalable.

THE CONSTRAINTS

There was no documentation to inherit, no process to adapt, and no clear model for how UAT should work. I was starting from a blank page, which meant the first challenge wasn't doing the work, it was defining what the work was.

The HWP product team operates on a two-week sprint cycle with release windows that don't move. UAT has a hard cutoff: the last Wednesday before the end of each sprint. Any process I introduced had to fit inside that cadence without creating new bottlenecks or pulling people away from their primary responsibilities.
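To make the cutoff rule concrete, here is a minimal Python sketch of how the date could be computed from a sprint's end date. The function name `uat_cutoff` is hypothetical and not part of any team tooling. The sketch also assumes "before the end of each sprint" means strictly before, so a sprint that ends on a Wednesday falls back to the previous Wednesday.

```python
from datetime import date, timedelta

WEDNESDAY = 2  # date.weekday() counts Monday as 0, so Wednesday is 2


def uat_cutoff(sprint_end: date) -> date:
    """Return the last Wednesday strictly before sprint_end.

    Assumption: a sprint ending on a Wednesday uses the prior Wednesday.
    """
    days_back = (sprint_end.weekday() - WEDNESDAY) % 7
    if days_back == 0:
        # sprint_end is itself a Wednesday; step back a full week
        days_back = 7
    return sprint_end - timedelta(days=days_back)


if __name__ == "__main__":
    # Example: a sprint ending Friday, January 31, 2025
    print(uat_cutoff(date(2025, 1, 31)))
```

A date like this is what a shared sprint/release calendar would surface to the team, so the UAT window is visible without anyone having to work it out by hand.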

Because the role was assigned rather than established, there was no pre-existing organizational trust in UAT as a formal function. The process had to demonstrate its value through consistency and outcomes.

DECISION POINTS

Decision #1: Document the process before scaling the work

What I noticed

The instinct when you're handed an ambiguous responsibility is to start doing things: to pick up wherever the last person left off and figure it out from there. But I didn't have a last person to pick up from. What I had was a vague directive and a sprint already underway.

What became clear was that the informal, judgment-based approach that had existed before wasn't scalable. UAT depended entirely on whoever was doing it already knowing the right questions to ask: which stories to test, how to report findings, what counted as a blocker, what happened after approval. There was no documentation, no shared criteria, no process anyone else could pick up.

The choice I made

Before I started testing anything, I spent time with the engineering lead to map out how UAT actually worked: where stories came from, what the release window looked like, who owned what at each step. From that conversation, I started building a process document, not as a formal deliverable, but as a live artifact I could update as decisions were made. The first version was sparse. But it was a start.

Why that choice was made

A process that lives in someone's head is one absence away from not existing. Documentation was the only way to make UAT durable, and to give the team something concrete to push back on, improve, and eventually own. The process document also became the mechanism through which every subsequent decision in this case study was captured and communicated. Without it, the program wouldn't have had a foundation to build on.

Decision #2: Create a formal distinction between standard and advanced UAT

What I noticed

Early on, UAT was a blanket approach. A single button fix and a major multi-workflow component launch went through the same process. Smaller stories got more scrutiny than they needed and higher-stakes ones didn't get enough.

The weekly retrospectives made it clear that some fixes just needed one person to confirm something worked as described. Others needed multiple people from different teams stress-testing the work before it went out to thousands of sites.

The choice I made

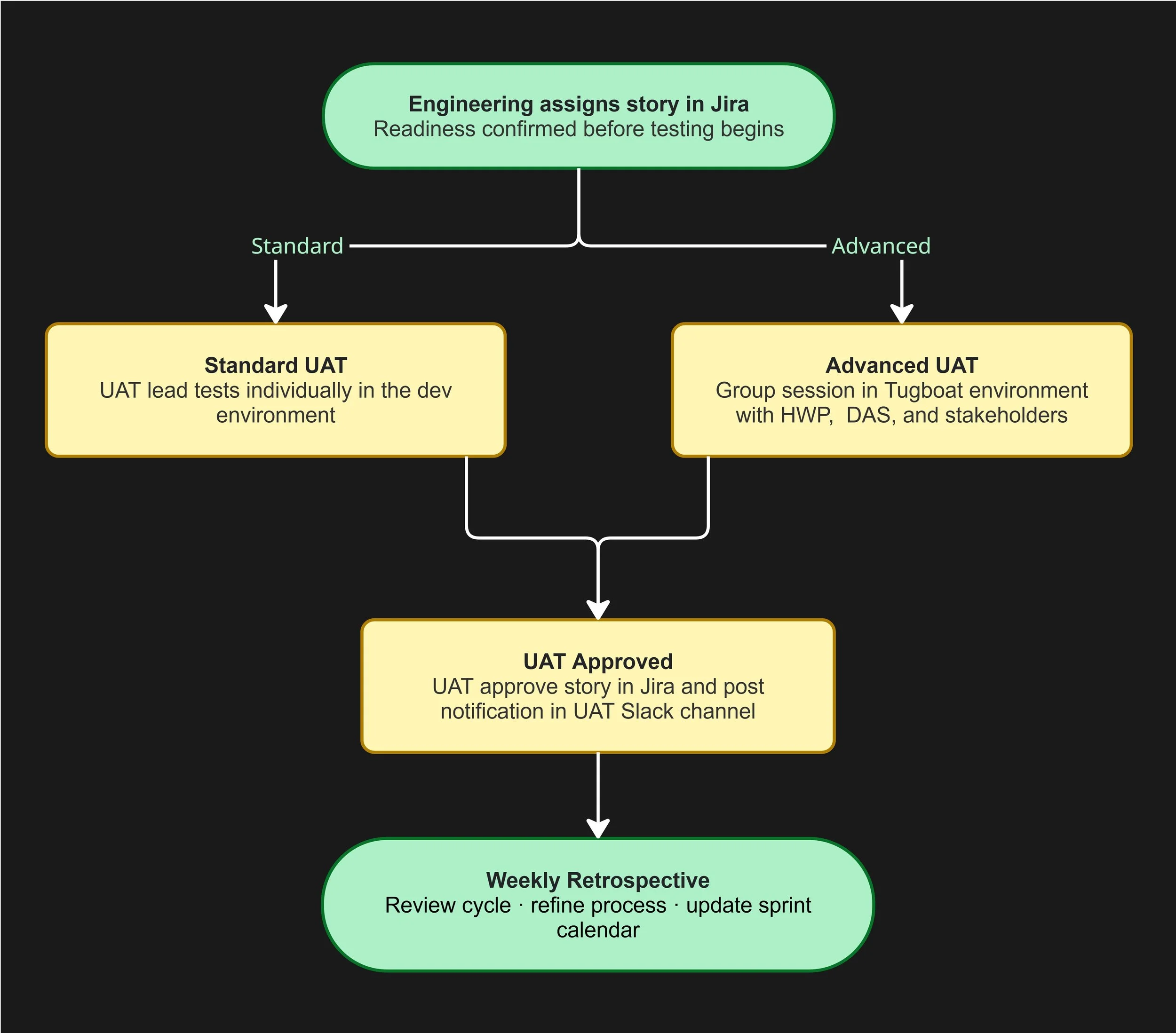

The solution was a two-track model. Standard UAT covers individual stories tested by the UAT Lead in the dev environment. Advanced UAT is reserved for high-impact or complex work where features touch multiple workflows, requiring cross-team validation. It runs in a Tugboat environment with structured sessions drawing in participants from the HarvardSites product team, Harvard's Digital Accessibility Services, and relevant stakeholders. To make the distinction operational, I worked with the engineering lead on a matrix to define when a story warrants the advanced track.
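As an illustration only, the routing decision could be sketched in Python as below. The actual criteria matrix is not reproduced here; the `Story` fields and the `uat_track` function are hypothetical names, and the rule simply encodes the three signals named above (high impact, more than one workflow touched, cross-team validation needed).

```python
from dataclasses import dataclass


@dataclass
class Story:
    """Hypothetical summary of a sprint story; fields are illustrative only."""
    title: str
    high_impact: bool = False
    workflows_touched: int = 1
    needs_cross_team_validation: bool = False


def uat_track(story: Story) -> str:
    """Return "advanced" if any assumed risk signal is present, else "standard"."""
    if (
        story.high_impact
        or story.workflows_touched > 1
        or story.needs_cross_team_validation
    ):
        return "advanced"
    return "standard"


if __name__ == "__main__":
    button_fix = Story(title="Fix button label")
    component_launch = Story(
        title="New multi-workflow component",
        high_impact=True,
        workflows_touched=3,
    )
    print(uat_track(button_fix), uat_track(component_launch))
```

Writing the rule down in any explicit form, whether a matrix or a sketch like this, means the track is chosen the same way no matter who is triaging the story.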

Why that choice was made

Treating every story the same became a resource problem and a quality control problem. The two-track model I designed allowed standard UAT to be lean and fast to meet sprint deadlines, while also giving the team a formalized mechanism to invest more effort where the risk was higher. It also made UAT a more credible and reliable function that was designed around our work rather than applied everywhere regardless of the need. On top of that, the criteria matrix kept the decision from being arbitrary.

Decision #3: Clarify the boundary between quality assurance and UAT

What I noticed

As the UAT program matured, a different problem surfaced. Engineering, design, and product didn't always have a shared understanding of where quality assurance (QA) ended and UAT began. The two terms were being used interchangeably in some conversations, and that ambiguity created confusion. Stories would reach UAT with unresolved technical issues that should have been caught earlier. Feedback from UAT sessions was sometimes treated as a prompt for more QA rather than as a signal about UX and business needs.

The confusion wasn't anyone's fault. The two functions had evolved side by side without a formal conversation about how they were different or how they were supposed to work together.

The choice I made

I brought the question to a cross-functional meeting that included engineering, product, design, and the senior project manager. Rather than arriving with a proposed answer, I opened with a question: does the current system work? From that conversation, we landed on a shared definition. QA owns technical acceptance: whether the work functions, meets the acceptance criteria, and avoids regressions. UAT owns business and UX acceptance: whether the work serves the actual user need, aligns with the expected workflow, and meets stakeholder expectations.

Why that choice was made

This mattered because, without it, UAT was absorbing work that wasn't its responsibility and sometimes missing the work that was. Clarifying the boundary sharpened the scope of UAT. The team left that meeting with a shared vocabulary that made handoffs cleaner and reduced the late-stage confusion that could threaten release deadlines. It also positioned UAT as a distinct and valued function rather than a QA afterthought.

OUTCOMES

What started as an undefined responsibility handed off without context became a structured, documented, team-wide practice that has continued to evolve with the platform and our team.

A living process document, updated continuously with versioned change history

A two-track UAT model (standard and advanced) with clear criteria, defined roles, and tooling integration in Jira

A sprint/release calendar that made UAT windows visible to the entire product team

Advanced UAT sessions completed for major feature launches including design and functionality releases

A shared observations template and agenda format that made advanced UAT sessions consistent and repeatable

A clear organizational boundary between QA and UAT

Role responsibilities formally split, with the senior project manager leading standard UAT and the UX Research Lead leading advanced UAT

The program became stable enough to transfer, which I argue is the clearest measure of whether a process was built with other people in mind

REFLECTION

The thing about inheriting an undefined role is that there is little or nothing to draw from. There's no "how we've always done it" to fall back on. It can feel uncomfortable at first, but it also means every decision you make is a design decision, and the program you build reflects the thinking you put into it.

What I kept coming back to throughout this work was the same question I ask in any research or design project: who is this for, and what do they actually need? In this case, the users of the UAT process were engineers, designers, product managers, and stakeholders: people with full schedules and, understandably, limited patience for process overhead. Every decision I made was filtered through that lens. Does this make the process clearer? Does it reduce ambiguity? Does it make it easier for someone else to do this work when I'm not available?

The fact that standard UAT has now been handed off to the senior project manager is itself a meaningful outcome. A process that can only be run by the person who built it isn't a process at all, but a dependency.